A recently published research paper introduces a new and easy way to edit pictures: a simple ‘click and drag’ method built on generative AI.

Remember when editing pictures in Adobe Photoshop was a skill? That may no longer be the case, as AI could let anyone edit a photo the way they want in seconds with a simple click and drag. This development could even outshine text-to-image generators, since it has the potential to let a complete beginner edit like a pro.

DragGAN is a new AI editing tool developed by a group of researchers from several universities and research centers, including MIT and Google.

DragGAN – AI Editing tool

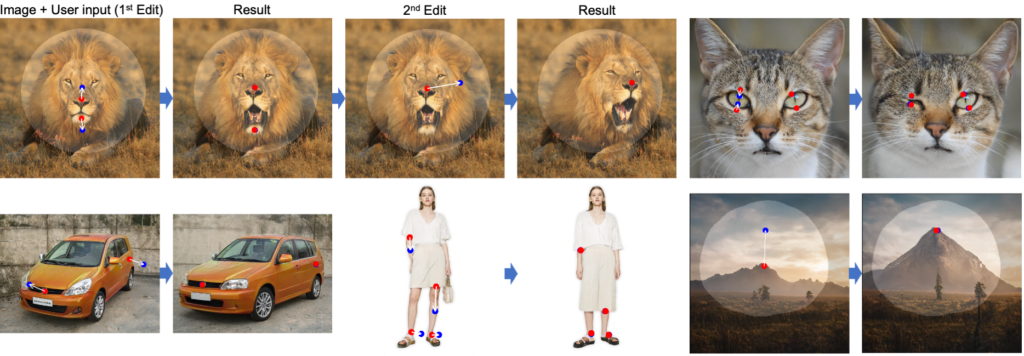

DragGAN offers an alternative, effortless way to edit photos by making a type of AI called a generative adversarial network (GAN) interactive. This sets it apart from AI image generators such as Midjourney and DALL-E 2: users edit an image by dragging any point on it to a target point. By contrast, conventional approaches to controlling GANs rely on manually annotated training data or a prior 3D model, so the edits you can get are limited to what that existing data covers.

Anyone who has experimented with text-to-image generators may have felt this. You type in a prompt that seems descriptive and clear enough, but the result doesn’t match the picture you had in mind. It is also quite hard to edit or upscale the picture on those platforms.

The DragGAN tool aims to solve this by giving users flexibility, precision, and generality.

In a series of videos released alongside the research paper, the researchers show how easy this point-to-point drag method makes editing pictures.

How does it work?

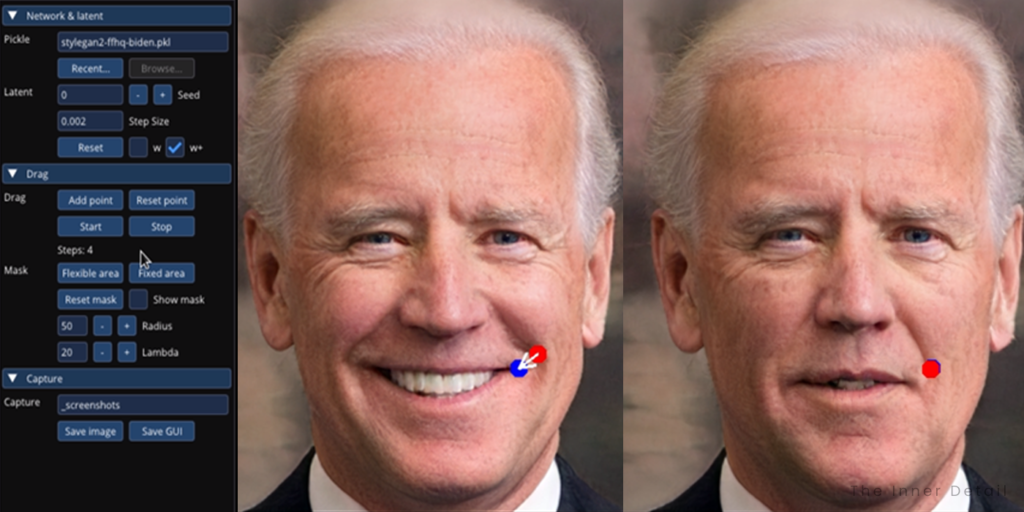

Editing a picture with DragGAN involves two simple steps. First, place a point (the handle) on the part of the image you want to edit. Then place a second point as the target. The tool moves the handle point to the target point and reshapes the picture accordingly, while keeping the result realistic. It is photo editing at its simplest.

“The tool consists of two components: 1) a feature-based motion supervision that drives the handle point to move towards the target position, and 2) a new point tracking approach that leverages the discriminative GAN features to keep localizing the position of the handle points,” the research paper states.
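To make the interplay of those two components concrete, here is a minimal toy sketch in plain Python. It is not the authors' implementation. It assumes a made-up "feature" (a pixel's offset from a movable content centre) in place of real GAN features, it replaces the motion-supervision optimization with a one-pixel shift of the content toward the target, and it assumes tracking is a nearest-neighbour search in a small patch. All function names are hypothetical.

```python
import math

def feature_at(pixel, centre):
    # Toy stand-in for a GAN feature: the pixel's offset from the content centre.
    return (pixel[0] - centre[0], pixel[1] - centre[1])

def motion_step(handle, target):
    # Unit direction from handle to target, rounded to a one-pixel step.
    dx, dy = target[0] - handle[0], target[1] - handle[1]
    norm = math.hypot(dx, dy)
    return (round(dx / norm), round(dy / norm))

def track_handle(handle, ref_feature, centre, radius=2):
    # Nearest-neighbour search in a patch around the last known handle position:
    # pick the pixel whose feature is closest to the handle's original feature.
    best, best_dist = handle, float("inf")
    for x in range(handle[0] - radius, handle[0] + radius + 1):
        for y in range(handle[1] - radius, handle[1] + radius + 1):
            f = feature_at((x, y), centre)
            dist = abs(f[0] - ref_feature[0]) + abs(f[1] - ref_feature[1])
            if dist < best_dist:
                best, best_dist = (x, y), dist
    return best

def drag(handle, target, centre=(0, 0), max_iters=100):
    # Remember what the handle looked like before any editing.
    ref_feature = feature_at(handle, centre)
    path = [handle]
    for _ in range(max_iters):
        if handle == target:
            break
        step = motion_step(handle, target)
        # Assumption: shifting the content stands in for updating the GAN's latent code.
        centre = (centre[0] + step[0], centre[1] + step[1])
        # Re-locate the handle after the image content has moved.
        handle = track_handle(handle, ref_feature, centre)
        path.append(handle)
    return path

path = drag(handle=(2, 2), target=(5, 3))
print(path)
```

Each pass nudges the content a little toward the target and then re-locates the handle, which is why the tracking step matters: without it, the next nudge would be computed from a stale position. In this toy run the handle starts at (2, 2) and ends on the target at (5, 3).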

For example, in a demonstration that edits a photo of Biden’s face, a handle point is placed on the smile line in his cheek and a target point at the corner of his mouth. In a couple of seconds, Biden stops smiling and the smile line disappears, an edit that would take minutes or even hours in regular Photoshop.

And it doesn’t stop there. Place a point on Biden’s nose and a target point a few millimeters away, and the viewing angle of his entire face changes to match. A few more clicks give Biden more hair on his head. It’s really amazing!

“Through DragGAN, anyone can deform an image with precise control over where pixels go, thus manipulating the pose, shape, expression, and layout of diverse categories such as animals, cars, humans, landscapes, etc.”

If released, DragGAN could be groundbreaking: it might surpass Adobe Photoshop for this kind of edit and save much of the time and effort that photo editing takes.

The project is a collaboration between researchers from the Max Planck Institute for Informatics, the Saarbrücken Research Center for Visual Computing, MIT, the University of Pennsylvania, and Google.

(For more informative stories on technology and innovation, keep reading The Inner Detail.)