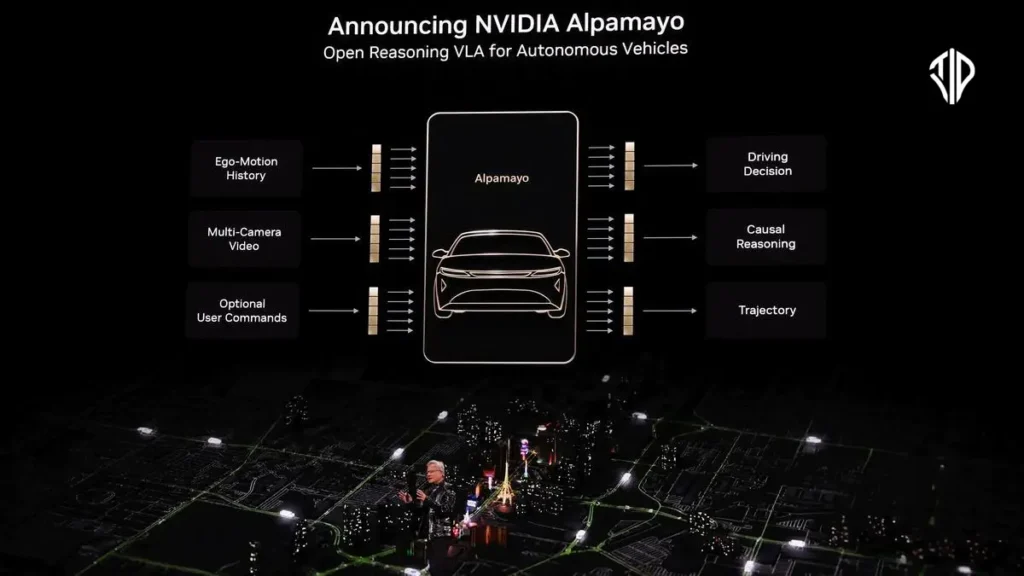

NVIDIA has recently unveiled its Alpamayo family of open-source AI models and tools, marking a significant stride in accelerating the development of safe, reasoning-based autonomous vehicles. This groundbreaking suite is poised to transform how self-driving systems perceive, reason, and act in the complex real world, addressing some of the industry’s most persistent challenges.

The journey toward Level 4 autonomy, in which a vehicle drives itself without human intervention inside a defined operating domain, has been fraught with challenges, particularly when confronting the “long tail” of rare and complex driving scenarios. Traditional autonomous vehicle architectures, which often separate perception from planning, struggle to scale when faced with unusual or unforeseen situations.

While end-to-end learning has shown promise, overcoming these edge cases demands a deeper level of intelligence: the ability to reason about cause and effect, especially when circumstances fall outside a model’s trained experience. This is where NVIDIA’s Alpamayo family steps in, introducing a human-like capacity for step-by-step judgment.

Human-like AI Reasoning in Autonomous Cars

At the heart of the Alpamayo family are its innovative chain-of-thought, reasoning-based vision language action (VLA) models. These systems are designed to emulate human thinking, processing novel or rare scenarios step by step.

This capability not only enhances driving performance but also provides critical explainability, allowing developers to understand the logic behind an autonomous vehicle’s decisions.

Such transparency is indispensable for building trust and scaling safety in intelligent vehicles. Jensen Huang, founder and CEO of NVIDIA, aptly stated, “The ChatGPT moment for physical AI is here — when machines begin to understand, reason and act in the real world… Alpamayo brings reasoning to autonomous vehicles, allowing them to think through rare scenarios, drive safely in complex environments and explain their driving decisions — it’s the foundation for safe, scalable autonomy.”

Components of Alpamayo AI

The Alpamayo family is more than just a single model; it’s a comprehensive, open ecosystem integrating three foundational pillars designed for automotive developers and the research community. These models primarily serve as large-scale teacher models, which developers can then fine-tune and distill into their specific autonomous vehicle stacks.
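To make the teacher-to-student idea concrete, here is a minimal sketch of one loss a distillation setup might minimize, assuming the large teacher model's planned trajectory is used as a regression target for a smaller student. The `Waypoint` type and the `imitation_loss` function are hypothetical names for illustration only; they are not part of Alpamayo or any NVIDIA tooling.

```python
from typing import List, Tuple

# Hypothetical representation: a trajectory is a list of (x, y) waypoints in meters.
Waypoint = Tuple[float, float]


def imitation_loss(student: List[Waypoint], teacher: List[Waypoint]) -> float:
    """Mean squared distance between matching student and teacher waypoints."""
    if len(student) != len(teacher) or not teacher:
        raise ValueError("trajectories must be non-empty and of equal length")
    total = 0.0
    for (sx, sy), (tx, ty) in zip(student, teacher):
        total += (sx - tx) ** 2 + (sy - ty) ** 2
    return total / len(teacher)


# Assumed example data: the student drifts 2 m sideways at the second waypoint.
teacher_plan = [(0.0, 0.0), (1.0, 2.0)]
student_plan = [(0.0, 0.0), (1.0, 0.0)]
loss = imitation_loss(student_plan, teacher_plan)
```

In a real pipeline this loss would be one term in a training objective, with the student's parameters updated to reduce it over many driving clips.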

The key components include:

- Alpamayo 1: NVIDIA describes this as the industry’s first chain-of-thought reasoning VLA model developed specifically for the autonomous vehicle research community. Available on Hugging Face, Alpamayo 1 has a 10-billion-parameter architecture that takes video input and generates both driving trajectories and reasoning traces, that is, the logical steps behind each decision. Developers can adapt the model into smaller, runtime-optimized versions for vehicles or use it as a basis for tools such as reasoning-based evaluators and auto-labeling systems. NVIDIA has released Alpamayo 1 with open model weights and open-source inferencing scripts.

- AlpaSim: To complement the models, NVIDIA also introduced AlpaSim, a fully open-source, end-to-end simulation framework for high-fidelity autonomous vehicle development. Accessible on GitHub, AlpaSim provides realistic sensor modeling, configurable traffic dynamics, and scalable closed-loop testing environments. This allows for rapid validation of autonomous policies and their refinement in a safe, virtual setting before real-world deployment.

- Physical AI Open Datasets: NVIDIA is also offering what it calls one of the most diverse large-scale open datasets for autonomous vehicles. It comprises over 1,700 hours of driving data collected across a wide range of geographies and conditions, and is intended for training and advancing reasoning architectures, especially on long-tail edge cases. The datasets are also available on Hugging Face.

Together, these pillars create a self-reinforcing development loop, enabling a new paradigm for building sophisticated, reasoning-based autonomous vehicle stacks.
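The loop described above, in which a model outputs a trajectory plus a reasoning trace and a simulator feeds the result back in, can be sketched in a few lines. This is a toy illustration under stated assumptions: `State`, `Decision`, `toy_policy`, and `run_closed_loop` are hypothetical names, the "policy" is a hand-written rule rather than a VLA model, and nothing here reflects the actual Alpamayo 1 or AlpaSim interfaces.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class State:
    ego_x: float        # ego vehicle position along the lane, in meters
    obstacle_x: float   # position of a stationary obstacle, in meters


@dataclass
class Decision:
    trajectory: List[Tuple[float, float]]  # planned (x, y) waypoints
    reasoning: str                         # human-readable trace for this step


def toy_policy(state: State) -> Decision:
    """Stand-in for a reasoning model: advance unless the gap is under 10 m."""
    gap = state.obstacle_x - state.ego_x
    if gap < 10.0:
        return Decision([(state.ego_x, 0.0)], f"Obstacle {gap:.1f} m ahead: stopping.")
    return Decision([(state.ego_x + 5.0, 0.0)], f"Gap of {gap:.1f} m is clear: advancing 5 m.")


def run_closed_loop(policy: Callable[[State], Decision], state: State,
                    steps: int) -> Tuple[State, List[str]]:
    """Feed each decision back into the simulated state and log the reasoning."""
    log: List[str] = []
    for _ in range(steps):
        decision = policy(state)
        log.append(decision.reasoning)
        # The simulator moves the ego vehicle to the last planned waypoint.
        state = State(ego_x=decision.trajectory[-1][0], obstacle_x=state.obstacle_x)
    return state, log


final_state, trace = run_closed_loop(toy_policy, State(ego_x=0.0, obstacle_x=22.0), steps=5)
```

The reasoning log is what makes each step auditable: a reviewer can read why the vehicle stopped, not only that it did.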

Industry Support and Future Implications

The unveiling of Alpamayo has garnered significant interest from mobility leaders and research institutions alike, including Lucid, JLR, Uber, and Berkeley DeepDrive.

These industry experts recognize Alpamayo’s potential to accelerate their Level 4 autonomy roadmaps by providing tools that enhance transparency, improve decision-making in complex environments, and ultimately, bolster safety.

As Kai Stepper, vice president of ADAS and autonomous driving at Lucid Motors, put it, “The shift toward physical AI highlights the growing need for AI systems that can reason about real-world behavior, not just process data.”

Beyond the Alpamayo family, developers can further leverage NVIDIA’s extensive library of tools and platforms, such as NVIDIA Cosmos and NVIDIA Omniverse, and integrate these models into architectures like NVIDIA DRIVE Hyperion built with NVIDIA DRIVE AGX Thor accelerated compute.

Taken together, the open models, simulation tools, and datasets give developers a shared starting point for building safer, more capable autonomous driving systems.

Key Takeaways

- NVIDIA’s Alpamayo family introduces open-source AI models and tools aimed at accelerating safe, reasoning-based autonomous vehicles.

- The core innovation is chain-of-thought, reasoning-based VLA models (like Alpamayo 1) that emulate human thinking for complex driving scenarios, enhancing performance and explainability.

- The Alpamayo ecosystem comprises Alpamayo 1 (a 10-billion-parameter VLA model with reasoning traces), AlpaSim (an open-source simulation framework), and extensive Physical AI Open Datasets (1,700+ hours of driving data).

- These tools are designed to address “long tail” edge cases in Level 4 autonomous deployment by enabling vehicles to reason about cause and effect.

- Industry leaders and research institutions, including Lucid, JLR, Uber, and Berkeley DeepDrive, support Alpamayo’s potential to enhance transparency, improve decision-making, and bolster safety in autonomous driving.
