Amazon has announced two new chips, Trainium2 and Graviton4, for its Amazon Web Services customers, along with an AI chatbot called “Q” for businesses and enterprises.

Amazon Web Services (AWS), the world’s leading cloud computing provider, is making a big move to keep pace with the AI trend and extend its market share. AWS already leads the cloud market with a 32% share, and the announcement of its new AI chips, along with its collaboration with Nvidia, could considerably boost its business.

AWS will host a special computing cluster where customers can use the company’s own AI chips and also access Nvidia’s next-generation H200 Tensor Core graphics processing units (GPUs).

AWS’ AI Chips

AWS announced two next-generation chips, Graviton4 and Trainium2, for its cloud business. According to Amazon, the chips deliver better price performance and energy efficiency for a broad range of customer workloads, including machine learning (ML) training and generative artificial intelligence (AI) applications.

Graviton4 is a general-purpose processor for a wide range of cloud computing workloads, while Trainium2 is designed specifically for training large AI models.

“AWS Graviton4 is the most powerful and energy efficient AWS processor to date for a broad range of cloud workloads. AWS Trainium2 will power the highest performance compute on AWS for training foundation models faster and at a lower cost, while using less energy,” Amazon wrote.

Graviton4 provides up to 30% better compute performance, 50% more cores and 75% more memory bandwidth than its predecessor, Graviton3, delivering the best price performance and energy efficiency for workloads running on Amazon EC2. More than 50,000 AWS customers already use Graviton chips, Amazon said.

Trainium2 is designed to deliver up to 4x faster training than its predecessor, helping customers train large language models (LLMs) in a fraction of the time while improving energy efficiency by up to 2x.

AWS and Nvidia

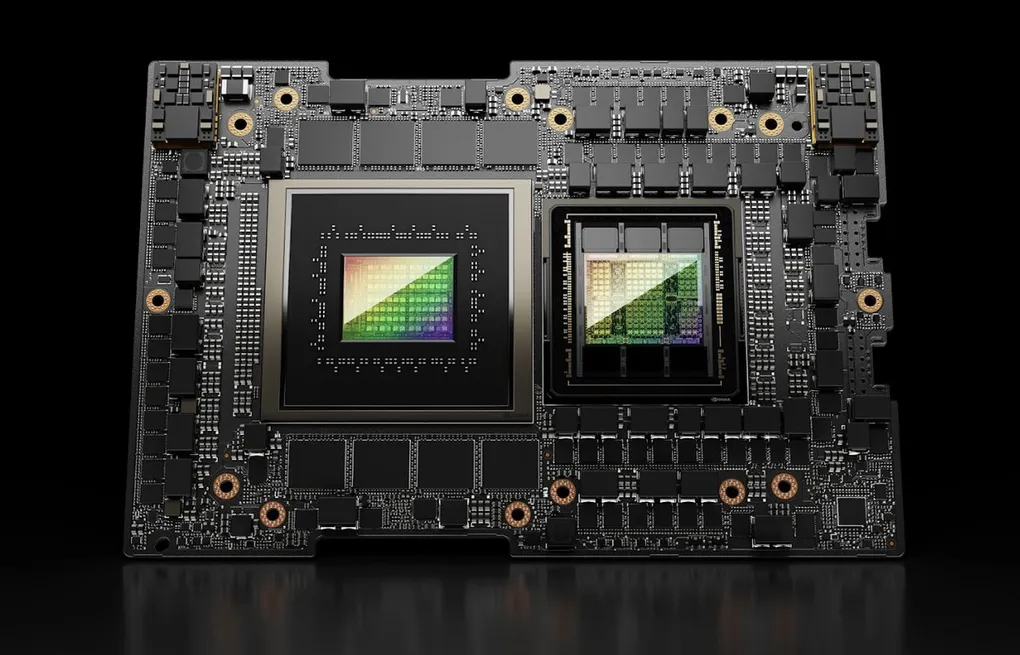

Through its collaboration with Nvidia, AWS will offer access to Nvidia’s latest H200 AI GPU, which can generate output nearly twice as fast as its predecessor, the H100, the chip OpenAI used to train GPT-4.

AWS said it will operate more than 16,000 Nvidia GH200 Grace Hopper Superchips, which combine Nvidia’s GPUs with Arm-based general-purpose processors. (Arm is a leading semiconductor design firm whose chip architectures are licensed by big players such as Google and Apple.)

Demand for Nvidia’s AI chips has soared since the launch of ChatGPT, even leading to a shortage as companies rush to buy the chips and bring the technology into their businesses.

Amazon and Nvidia are also teaming up on DGX Cloud Project Ceiba, which they describe as the world’s fastest AI supercomputer.

Amazon Q

The AI race is real. Big tech firms are building their own AI chatbots, and Amazon has now joined the race with its new artificial intelligence assistant, “Amazon Q”.

Amazon Q aims to help employees with daily tasks, such as summarizing strategy documents, filling out internal support tickets and answering questions about company policy. Amazon Q is meant only for workplaces, meaning businesses and enterprises, and is not available to the general public.

For shoppers, however, Amazon is working on an AI project called “Nile” that will bring AI to the search feature on its website, returning more relevant results faster.

Amazon Q will compete with Google’s Duet AI, OpenAI’s ChatGPT Enterprise and Microsoft’s Copilot. “We think Q has the potential to become a work companion for millions and millions of people in their work life,” Adam Selipsky, the Chief Executive of Amazon Web Services, said in an interview.

Amazon Q is not built on a single AI model. Instead, it uses an Amazon platform known as Bedrock, which brings several AI models together, including Amazon’s own Titan as well as models developed by Anthropic and Meta.

Safety is primary

Through AWS, Amazon already has a vast base of business customers, and Q will serve their employees. Companies have been interested in using chatbots only if they could be assured of the safety and security of the troves of corporate data the chatbots would be trained on and work with. Q is specifically built to be more secure and private than a consumer chatbot, Selipsky said.

Q also supports access restrictions based on departments within a company. For example, a marketing employee may not have access to sensitive financial forecasts.

Pricing for Amazon Q starts at $20 per user each month. Microsoft and Google both charge $30 a month for each user of the enterprise chatbots that work with their email and other productivity applications.

(For more interesting stories on technology and innovation, keep reading The Inner Detail.)
