The rapid ascent of Artificial Intelligence (AI) has brought forth incredible innovations, from chatbots that can mimic human conversation to image generators that conjure breathtaking art from a few words. Yet, beneath the veneer of technological marvel, a fierce legal battle is raging – one that pits AI giants against a multitude of creators over the very fuel that powers these systems: copyrighted content.

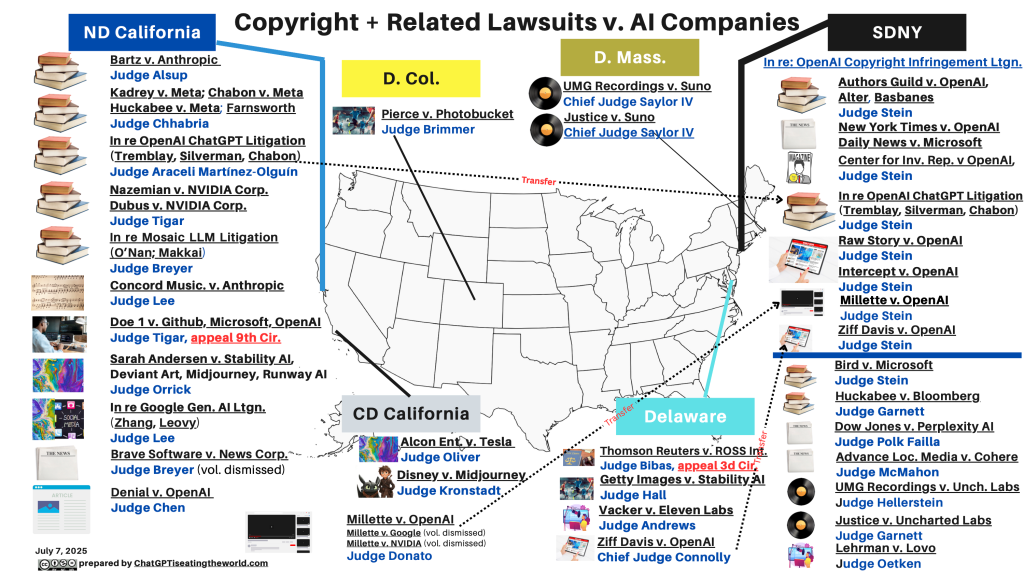

With over 50 copyright lawsuits filed against AI companies in the U.S. alone as of July 2025, and many more globally, this “wider legal war” is shaping the future of digital creation and compensation.

Why Are AI Companies Being Sued for Copyright Infringement?

At its core, the dispute stems from how generative AI models are built. These sophisticated systems learn by ingesting vast quantities of data. Automated bots, known as AI crawlers, indiscriminately scrape colossal amounts of information from the internet, including copyrighted articles, images, texts, and music, without explicit permission from their creators. This “free data lunch,” as some plaintiffs call it, has enabled AI systems to become powerful, but it has also led to a significant legal backlash.

Authors, artists, musicians, and major media organizations are deeply concerned that AI is “stealing” their work to produce new content, often without compensation or attribution. While AI companies require massive amounts of high-quality, human-created content to make their models more coherent and human-like, negotiating and paying creators for access can be costly and time-consuming. This has often led companies to bypass traditional licensing, sometimes even relying on “shadow libraries” (databases of pirated materials) for training data.

Key concerns driving these lawsuits include:

- Unauthorized Copying: The very act of copying copyrighted material to create training datasets is seen as infringement.

- Market Dilution: Creators fear that AI will “flood the market” with machine-written or generated content that is similar to, or derived from, their original works, thereby devaluing their profession.

- The “Black Box” Problem: Many generative AI systems are considered “black boxes,” meaning even their programmers don’t fully understand the precise internal steps that lead from input to output. This opacity makes it challenging to determine exactly how copyrighted works are being used and if the outputs are direct infringements.

- Misuse of Information: Allegations also extend to violations of copyright management information (such as watermarks) and privacy claims regarding personal data used for training.

The Front Lines: Key Lawsuits Against Tech Giants

The legal landscape is dotted with numerous high-profile cases targeting some of the biggest names in AI:

- Anthropic’s Landmark Settlement: Anthropic, maker of the Claude chatbot, faced a class-action lawsuit from authors like Andrea Bartz, Kirk Wallace Johnson, and Charles Graeber, who alleged their books were used without permission to train its AI. While a judge initially ruled that using books for AI training was “exceedingly transformative” under fair use, he ordered Anthropic to stand trial over its use of pirated material. In a significant development, Anthropic agreed to pay $1.5 billion to settle this lawsuit, acknowledging its use of more than seven million pirated books. This is poised to be the largest publicly-reported copyright recovery in history and sets a major precedent for future cases. Anthropic also faces a lawsuit from music publishers, led by Universal Music Group, concerning the alleged infringement of musical works and lyrics.

- OpenAI and Microsoft’s Battle with The New York Times: One of the most closely watched cases is The New York Times Co. v. Microsoft Corp., OpenAI. The Times is suing both companies for copyright infringement, alleging that millions of its articles were used to train OpenAI’s chatbot and other technologies. The lawsuit claims that these AI models now compete directly with the Times as a source of reliable information and can even mimic its style and recite content verbatim. Several other newspaper groups have also filed similar lawsuits against OpenAI and Microsoft. Separately, authors Paul Tremblay and Mona Awad, along with Sarah Silverman and others, are suing OpenAI, claiming their books were used from illegal “shadow libraries” to train GPT models, which can then produce detailed summaries of their works.

- Meta’s Standoff with Authors: Meta, the parent company of Instagram, has faced multiple lawsuits, including from authors like Richard Kadrey and Sarah Silverman, who alleged their books were used without permission from pirated databases (like “Books3” from Bibliotik) to train Meta’s LLaMA AI program. Although a judge granted Meta’s motion for partial summary judgment on fair use in one of these cases, the broader legal questions remain.

- Midjourney’s Iconic Character Clash: AI image generator Midjourney is being sued by Disney and NBCUniversal, who allege that the company’s AI models made illegal use of their iconic characters, such as Darth Vader and the Simpson family. Experts suggest that cases involving images might be “more favorable to copyright holders” if the AI models are found to be producing images identical to the copyrighted training data.

- Apple’s Scraped Novels Allegation: Just before its iPhone 17 event, Apple was hit with a copyright lawsuit by authors Grady Hendrix and Jennifer Roberson. They allege that Apple used a software program called “Applebot” to scrape data from “shadow libraries” like Books3, containing their pirated novels, to train its AI without their consent or compensation.

- Google’s Diverse Data Battle: Google also faces class-action lawsuits alleging the misuse of personal information and copyright infringement. This includes claims about the use of photos from dating websites, Spotify playlists, TikTok videos, and books to train its Bard AI (now Gemini). Visual artists also sued Google’s Imagen text-to-image generator for using their works without permission.

Solution to AI Copyright issues

The proliferation of these lawsuits underscores the urgent need for a clear framework that balances AI innovation with creators’ rights. Several potential solutions and guiding principles are emerging:

- Establishing Compensation Models: The Anthropic settlement sets a powerful precedent, indicating that AI companies will likely need to pay copyright owners for the use of their content. This could encourage more cooperation between AI developers and creators, recognizing the value of human-created data.

- Rethinking Fair Use: The legal concept of “fair use,” which allows limited use of copyrighted material without permission, is at the heart of many defenses. However, judges are grappling with how fair-use analysis applies to different media types (text, images, audio), especially when AI models might produce content identical to or directly competitive with original works.

- Creator Control and Opt-Outs: There is a growing demand for creators to have the ability to opt out of having their work used to train AI systems. Technologies like Cloudflare’s “pay-per-crawl” model offer website owners the option to block AI crawlers or charge for access, providing a potential pathway for creators to control and monetize their data.

- Legal Clarity: These ongoing cases are critical for answering fundamental questions: Does training an AI model on copyrighted material require a license? Does AI output infringe on copyright? Who is liable when AI infringes? Do AI models violate restrictions on copyright management information (like watermarks)? And does generating work in someone’s style violate their rights of publicity? The outcomes will provide much-needed guidance for both AI companies and content creators.

The Evolving Landscape of Content and AI

The sheer volume and diversity of these lawsuits—spanning written works, images, videos, audio, code, and even voice recordings—highlight that this isn’t a niche legal issue, but a foundational challenge for the AI era. The stakes are high: on one side, tech companies championing innovation and the transformative potential of AI; on the other, creators fighting for their livelihoods and the protection of intellectual property built over generations.

As these legal battles unfold, they will undoubtedly shape the future relationship between technology and creativity, pushing toward a future where AI systems are not only intelligent but also ethically accountable and respectful of the human ingenuity they rely upon.

Key Takeaways

- AI companies face increasing copyright lawsuits over the use of copyrighted material for training AI models.

- Key issues include unauthorized copying, market dilution, the “black box” problem, and misuse of copyright management information.

- Landmark cases like Anthropic’s settlement and The New York Times’ lawsuit against OpenAI and Microsoft are shaping legal precedents.

- Potential solutions involve establishing compensation models, rethinking fair use, and providing creators with control and opt-out options.

Join our community by subscribing to our Weekly Newsletter to stay updated on the latest AI updates and technologies, including the tips and how-to guides. (Also, follow us on Instagram (@inner_detail) for more updates in your feed).

(For more such interesting informational, technology and innovation stuffs, keep reading The Inner Detail).

It’s appropriate time to make a few plans for the longer term and it’s time to be happy. I’ve learn this publish and if I could I wish to suggest you few interesting issues or tips. Perhaps you can write subsequent articles regarding this article. I desire to learn even more issues approximately it!