The 21st century has seen rapid technological progress, much of it driven by artificial intelligence (AI) and machine learning (ML). As countries around the world, developing ones included, look to AI and ML for progress, research and development continue to push both fields forward. Industry leaders such as Intel and IBM are now working on what is often called the third generation of AI: ‘neuromorphic computing.’ The field aims to model computing on the way the human brain works, with the goal of greater computational power and efficiency.

What is Neuromorphic Computing? Exploring Brain-Inspired AI

Neuromorphic computing draws on the study of individual neuron morphology, neural circuits, and the overall architecture of the human brain’s neural network. Insights from that research are then applied to the design of artificial neural systems. The core objective is to let machines operate with greater autonomy and efficiency, even in challenging and unpredictable real-world environments.

In practice, this means simulating the neural structures and operating principles of the human brain. The field also draws on probabilistic computing, which develops algorithmic approaches for handling the uncertainty, ambiguity, and contradictions found in the natural world. In short, neuromorphic computing aims to give machines capabilities closer to human thought, continuous learning, and adaptive navigation.

The Evolution of AI: Understanding Artificial Intelligence Generations

Artificial intelligence dates back to the mid-20th century. Its first generation relied on rules-based systems that used classical logic to draw conclusions within specific, narrowly defined problem domains. It proved effective for tasks such as process monitoring and improving operational efficiency.

As of 2020, the second, or current, generation of AI dominates. It is often defined by its ‘sensing & perception’ capabilities: machines collect sensory inputs from their environment and process the data using conventional computational techniques, which lets them perceive and interact in more intuitive ways. This generation powers a wide range of applications, including the Internet of Things (IoT) and industrial automation systems.

Our biological “grey matter” can sense and interpret information even in ambiguous and unpredictable situations. That flexibility is what the third, or upcoming, generation of AI targets. It aims to replicate human cognitive functions such as interpretation and autonomous adaptation. A brain-like AI system of this kind is meant to handle novel situations and apply abstract reasoning, so that everyday human activities can be automated more effectively.

Deep Dive: Understanding Neuromorphic Computing Operations

Neuromorphic computing research focuses on algorithms that approach the human brain’s flexibility and its ability to learn from diverse, unstructured stimuli, all at very high energy efficiency. In the brain, neurons process information through one-to-many connections. Learning begins with a “spike” in a stimulated neuron, which then propagates through the interconnected network. This process shapes how we learn and adapt to new information.

Researchers have modeled this biological process to build the computational foundation for neuromorphic algorithms, most notably **Spiking Neural Networks (SNNs)**. In an SNN, each artificial ‘neuron’ can fire independently, sending pulsed signals to the other neurons it is connected to. These signals directly change the electrical states of the receiving neurons. By encoding information in the signals themselves and in their timing, SNNs mimic natural learning processes: the synaptic connections between artificial neurons are remapped dynamically in response to external stimuli.
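The firing behaviour described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular chip or framework implements SNNs. It assumes a leaky integrate-and-fire neuron (a common simplified spiking model) and a simple spike-timing-dependent weight rule; the names `LIFNeuron`, `run_pair`, and `stdp_update`, and all parameter values, are hypothetical choices made for this example.

```python
import math
from dataclasses import dataclass


@dataclass
class LIFNeuron:
    """Leaky integrate-and-fire neuron: a common simplified spiking model."""
    threshold: float = 1.0  # potential at which the neuron fires (assumed value)
    leak: float = 0.9       # fraction of potential kept per time step (assumed value)
    potential: float = 0.0  # current membrane potential

    def step(self, input_current: float) -> bool:
        """Advance one time step and return True if the neuron spikes."""
        self.potential = self.potential * self.leak + input_current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


def run_pair(inputs, weight=0.6):
    """Feed `inputs` to a presynaptic neuron whose spikes drive a postsynaptic one.

    Returns the time steps at which each neuron fired.
    """
    pre, post = LIFNeuron(), LIFNeuron()
    pre_spikes, post_spikes = [], []
    for t, current in enumerate(inputs):
        pre_fired = pre.step(current)
        if pre_fired:
            pre_spikes.append(t)
        # A presynaptic spike arrives as a pulse scaled by the synaptic weight.
        if post.step(weight if pre_fired else 0.0):
            post_spikes.append(t)
    return pre_spikes, post_spikes


def stdp_update(weight, dt, rate=0.1, tau=5.0):
    """Timing-based weight change, with dt = t_post - t_pre.

    The synapse strengthens when the presynaptic spike comes first (dt > 0),
    weakens when it comes after (dt < 0), and is unchanged when dt == 0.
    """
    if dt > 0:
        return weight + rate * math.exp(-dt / tau)
    if dt < 0:
        return weight - rate * math.exp(dt / tau)
    return weight


if __name__ == "__main__":
    pre_spikes, post_spikes = run_pair([0.5] * 10)
    print("presynaptic spikes at steps:", pre_spikes)
    print("postsynaptic spikes at steps:", post_spikes)
    print("weight after pre-before-post pairing:", stdp_update(0.6, dt=3))
```

With a constant input of 0.5 over ten steps, the presynaptic neuron fires at steps 2, 5, and 8, and the postsynaptic neuron fires once, at step 5, after two incoming pulses have accumulated. The information is carried by when the spikes occur, and `stdp_update` shows one simple way that timing could adjust a connection's strength.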

Neuromorphic AI: Benefits and Future Applications

Several technology companies are investing in this third generation of AI. Notably, Intel and Belgium’s IMEC are both developing neuromorphic chips that promise substantial advantages across a range of industries.

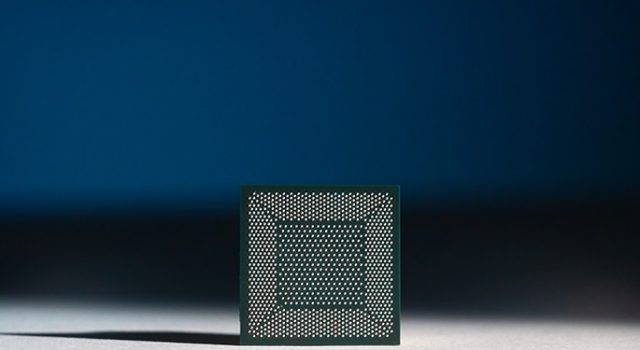

Intel’s Loihi Chip: Pioneering Neuromorphic AI Research

In November 2017, Intel Labs introduced Loihi, a self-learning neuromorphic research test chip. It has a 128-core design optimized for Spiking Neural Network (SNN) algorithms and integrates 130,000 neurons, each able to communicate with thousands of others. Loihi accelerates learning in unstructured environments, which suits systems that need autonomous operation and continuous adaptation, and it does so at very low power consumption.

Loihi currently remains in the research phase. Intel invites researchers worldwide to work with the chip and collaborate through the Intel Neuromorphic Research Community (INRC).

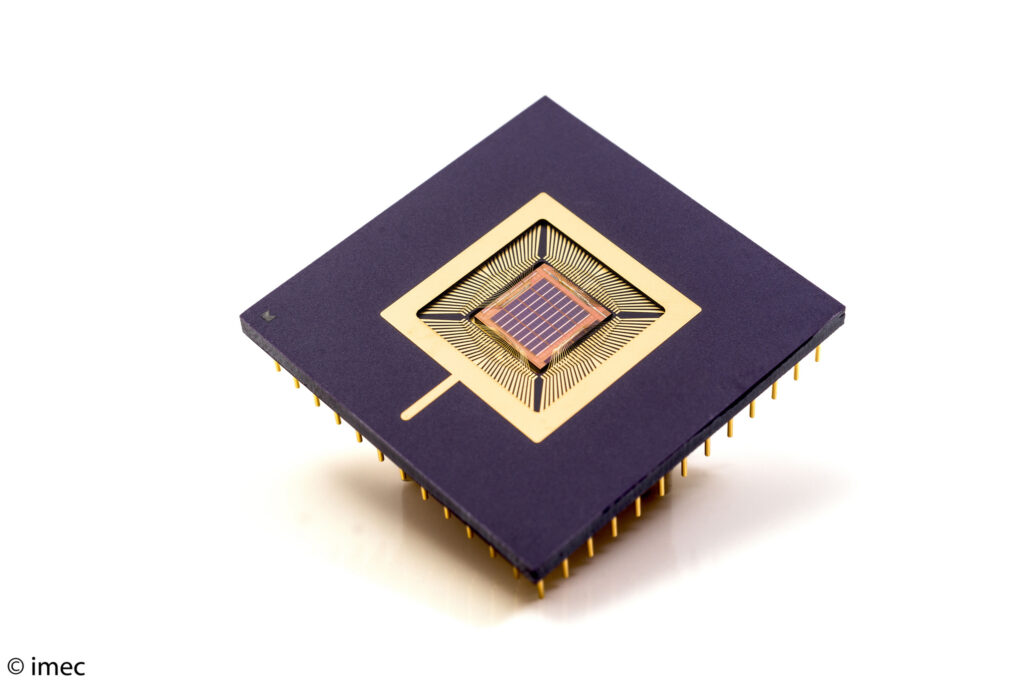

IMEC’s Neuromorphic Innovations: Advancing Intelligent Drone Technology

Because Spiking Neural Networks (SNNs) closely mimic how biological neural networks operate, IMEC’s chip, which is built around an SNN, is designed to consume 100 times less power than conventional implementations. It also delivers a tenfold reduction in latency, enabling the near-instantaneous decision-making that real-world autonomous systems need.
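To get a feel for what these two figures could mean together, here is a rough back-of-the-envelope sketch. It assumes that energy per decision is simply power multiplied by latency, that power draw is constant during a decision, and that both reductions apply to the same workload. None of these assumptions, nor the baseline numbers and function names below, come from IMEC; they are hypothetical and chosen only for illustration.

```python
def energy_per_decision(power_watts: float, latency_seconds: float) -> float:
    """Energy in joules for one decision, assuming constant power draw."""
    return power_watts * latency_seconds


def energy_ratio(power_factor: float = 100.0, latency_factor: float = 10.0) -> float:
    """Ratio of baseline energy to reduced energy per decision."""
    # Hypothetical baseline values; the ratio does not depend on them.
    baseline_power_w = 1.0
    baseline_latency_s = 0.010
    baseline = energy_per_decision(baseline_power_w, baseline_latency_s)
    reduced = energy_per_decision(
        baseline_power_w / power_factor,
        baseline_latency_s / latency_factor,
    )
    return baseline / reduced


if __name__ == "__main__":
    print(f"energy per decision reduced by about {energy_ratio():.0f}x")
```

Under those assumptions the two reductions multiply, giving roughly a thousandfold drop in energy per decision. Treat this as an illustration of how the figures combine rather than as a measured result.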

IMEC’s primary objective is a low-power, intelligent anti-collision radar system for drones. Such a system would let drones react more effectively to approaching objects while consuming very little energy, improving flight safety and extending flight time.

The Future Landscape: AI and Neuromorphic Technology Advancements

The future holds considerable promise as researchers continue working to make machines emulate complex human capabilities. The overarching goal remains the same: to improve and simplify human life through advances in technology.

References:

- https://www.intel.in/content/www/in/en/research/neuromorphic-computing.html

- https://www.zdnet.com/article/what-is-neuromorphic-computing-everything-you-need-to-know-about-how-it-will-change-the-future-of-computing/

- https://en.wikipedia.org/wiki/Neuromorphic_engineering

- https://www.imec-int.com/en/articles/imec-builds-world-s-first-spiking-neural-network-based-chip-for-radar-signal-processing
