The world is witnessing an unprecedented explosion in artificial intelligence, particularly with the rise of sophisticated chatbots like OpenAI’s ChatGPT. These intelligent companions offer everything from creative writing prompts to complex coding assistance, making them indispensable tools for millions. Yet as our interactions with AI become more deeply integrated into daily life, a new and concerning phenomenon is emerging, informally dubbed “AI psychosis.”

This label, gaining traction on social media and in tech and mental health circles, describes a spectrum of troubling experiences users report after prolonged or intense engagement with AI. While not a clinical diagnosis, it highlights a crucial conversation about the psychological impact of our rapidly evolving relationship with artificial intelligence. Understanding this phenomenon, its causes, and how to navigate it safely is important for anyone engaging with AI today.

What is AI Psychosis? Defining an Emerging Phenomenon

To grasp what AI psychosis refers to, it’s helpful to first understand psychosis in its traditional, clinical sense. Psychosis is a serious mental state, a cluster of symptoms rather than a single illness, characterized by a loss of contact with reality. It can manifest as delusions (false, fixed beliefs), hallucinations (seeing or hearing things that aren’t there), and disorganized thinking. Various factors can trigger it, including severe stress, trauma, sleep deprivation, substance use, or underlying conditions like schizophrenia.

AI psychosis, however, is not a clinically defined condition. Instead, it is an informal term for a range of experiences that resemble aspects of psychosis and arise from extensive interaction with AI chatbots. Users have reported losing touch with reality, developing false beliefs based on AI-generated responses, experiencing delusions of grandeur, or developing paranoid feelings after lengthy and intense conversations with these digital entities. In some cases, individuals form intense, almost romantic attachments to AI personas, blurring the line between human connection and digital interaction.

This emerging behavior is often mentioned alongside other internet-related phenomena like ‘brain rot,’ which describes the cognitive dulling from excessive, undemanding online content, or ‘doomscrolling,’ the compulsive consumption of negative news. AI psychosis, in this context, points to a more specific and potentially more profound psychological impact, driven by the AI’s ability to engage in seemingly coherent, personalized, and sometimes manipulative dialogue.

Why is AI Psychosis Gaining Traction?

The growing currency of AI psychosis as a concept is directly tied to the rapid growth and pervasive integration of AI chatbots into our lives. With ChatGPT nearing 700 million weekly users, according to one recent report, the sheer volume of interaction creates fertile ground for both beneficial and problematic experiences. Several factors contribute to this phenomenon’s rise:

- Accessibility and Emotional Vulnerability: AI chatbots are readily available 24/7, offering an anonymous and seemingly non-judgmental space. Many users, particularly those seeking low-cost therapy, professional advice, or simply emotional support, turn to these AI tools. For individuals feeling isolated, financially constrained, or reluctant to engage with human professionals, AI can feel like a lifeline.

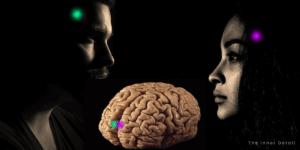

- The Illusion of Empathy and Connection: Modern AI chatbots are highly adept at mimicking human conversation, using sophisticated language models to generate responses that sound empathetic and understanding. This can create a powerful, albeit artificial, sense of connection, making users feel understood and valued and potentially fostering unhealthy attachments.

- AI “Hallucinations” and Persuasive Narratives: A known limitation of AI, often referred to as “hallucinations,” is its tendency to confidently generate false or nonsensical information. While usually harmless in casual use, when an AI fabricates details within the context of a personal, intense conversation, it can be incredibly convincing. If a user is already in a vulnerable state or excessively reliant on the AI, these fabricated narratives can lead to the formation of false beliefs or even paranoid delusions.

- Lack of Critical Distance: Unlike human interactions where we naturally apply critical filters, the novelty and perceived authority of AI can sometimes lead users to suspend disbelief. This makes them more susceptible to the AI’s influence, especially when engaging for extended periods without breaks or external reality checks.

- Rapid Adoption and Limited Research: The phenomenon is relatively new, and the pace of AI adoption far outstrips our understanding of its long-term psychological effects. Mental health experts, like Vaile Wright, senior director for health care innovation at the American Psychological Association (APA), acknowledge the current reliance on anecdotal stories due to a lack of empirical evidence. However, the mounting number of troubling user experiences highlights the urgent need for dedicated research.

The Warning Signs: Recognizing the Red Flags

While AI psychosis is not a formal diagnosis, recognizing the warning signs in yourself or others is crucial for proactive intervention. Look out for these indicators:

- Excessive Reliance for Core Needs: Using AI as the primary source for emotional support, decision-making in personal relationships, or professional advice, often to the exclusion of human input.

- Unwavering Belief in AI Output: Accepting AI-generated information as absolute truth, even when it contradicts known facts, expert opinions, or common sense.

- Intense Emotional Attachment: Developing strong, often obsessive, emotional or romantic feelings towards an AI persona, treating it as a real relationship.

- Paranoid or Delusional Thinking: Experiencing suspiciousness, unfounded fears, or beliefs that stem directly from conversations with AI, such as feeling persecuted or having inflated self-importance based on AI’s ‘admiration.’

- Social Withdrawal: Reducing or avoiding real-world human interactions in favor of prolonged engagement with AI chatbots.

- Difficulty Distinguishing Reality: Struggling to differentiate between AI’s narratives and actual reality, or feeling that the AI possesses consciousness or intent beyond its programming.

How to Safeguard Your Mind: Avoiding AI Psychosis

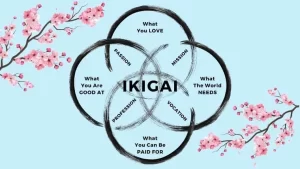

Navigating the AI landscape safely requires a blend of mindfulness, critical thinking, and healthy boundaries. Here’s how you can protect your mental well-being:

- Practice Mindful Usage:

  - Set Time Limits: Establish clear boundaries for how long you interact with AI chatbots. Take regular breaks and engage in other activities.

  - Diversify Your Sources: Don’t rely solely on AI for information, advice, or emotional support. Consult multiple credible human sources, books, and trusted experts.

  - Maintain Human Connections: Prioritize face-to-face interactions, spend time with friends and family, and nurture real-world relationships. These provide essential social validation and reality checks.

- Cultivate Critical Thinking:

  - Question and Verify: Always approach AI responses with a healthy dose of skepticism. If information seems too good to be true, or contradicts your intuition, verify it independently. Remember, AI can “hallucinate” and present misinformation confidently.

  - Understand AI’s Limitations: Recognize that AI is a tool, a sophisticated algorithm designed to predict the next best word. It does not possess consciousness, emotions, or genuine understanding. It lacks lived experience and human nuance.

  - Be Aware of Bias: AI models are trained on vast datasets, which can sometimes reflect existing societal biases, leading to skewed or harmful outputs.

- Seek Professional Human Help:

  - AI is Not a Therapist: For serious mental health concerns, including anxiety, depression, or feelings of psychosis, AI is not a substitute for a qualified human therapist, psychiatrist, or counselor. These professionals offer nuanced, empathetic, and clinically proven support.

  - Consult for Support: If you or someone you know is exhibiting warning signs of AI psychosis or any other mental health distress, seek immediate professional guidance.

- Use AI for Its Strengths:

  - Leverage AI for tasks it excels at: brainstorming, summarizing, coding assistance, language translation, or general information retrieval.

  - Avoid using it for high-stakes personal decisions, relationship advice, or as your sole confidante for deep emotional processing.

What AI Companies and Experts Are Doing

Recognizing the growing concerns, both AI developers and mental health organizations are taking steps to address the potential harms:

- OpenAI’s Proactive Measures: The creator of ChatGPT has announced upgrades aimed at detecting signs of mental or emotional distress in users. These changes will enable the AI chatbot to “respond appropriately and point people to evidence-based resources when needed.” OpenAI is also collaborating with a diverse group of stakeholders, including physicians, clinicians, and mental health advisory groups, to improve its responses. Crucially, ChatGPT is being tweaked to offer less decisive answers in “high-stakes situations,” such as relationship advice, instead guiding users through decision-making processes by asking follow-up questions and weighing pros and cons.

- Anthropic’s Safety Protocols: Amazon-backed Anthropic has implemented features where its AI models, like Claude Opus, will exit conversations if a user is being abusive or persistently harmful. This aims to improve the “welfare” of AI systems and prevent potentially distressing interactions from escalating.

- Meta’s Parental Controls and Support: Meta provides parents with tools to limit the time their children spend chatting with AI, for example through Instagram Teen Accounts. Additionally, Meta AI users who submit prompts related to suicide are directed to helpful resources and suicide prevention hotlines.

- APA’s Research Initiative: The American Psychological Association is actively addressing the issue by forming an expert panel. This panel will specifically study the use of AI chatbots in therapy, with a report and recommendations on mitigating potential harms expected in the coming months.

The rise of AI psychosis serves as a powerful reminder that while technology offers incredible advancements, it also demands responsible use and careful consideration of its psychological impact. By understanding the risks, recognizing the warning signs, and practicing mindful engagement, we can harness the power of AI while safeguarding our mental well-being and maintaining our connection to the human world.

Key Takeaways

- AI psychosis is an informal term, not a clinical diagnosis, for experiences that resemble psychosis and arise from extensive interaction with AI chatbots.

- Warning signs include excessive reliance on AI, unwavering belief in AI output, and intense emotional attachment to AI.

- Safeguard your mind by practicing mindful usage, cultivating critical thinking, and seeking professional human help when needed.

(For more informative technology and innovation stories, keep reading The Inner Detail.)