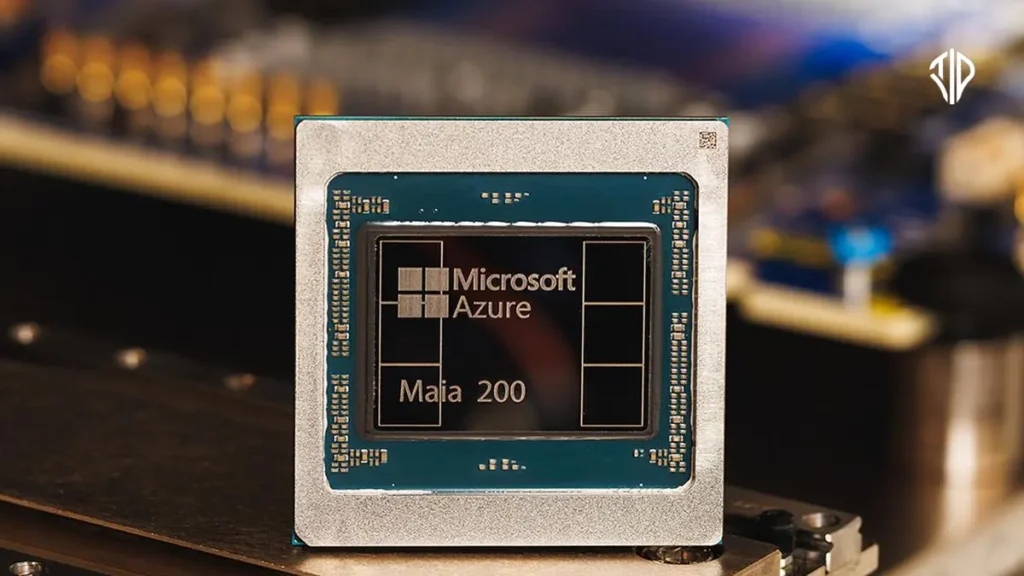

Microsoft has introduced Maia 200, a custom AI chip designed specifically for artificial intelligence inference workloads. The move aims to improve performance and efficiency across the company’s vast AI operations, and it directly challenges the dominance of established hardware providers like Nvidia.

In the world of high-performance computing, specialized chips are the engine behind groundbreaking advancements. Just as a Formula 1 race car is meticulously engineered for speed and agility on the track, distinct from a robust off-road vehicle designed for endurance across varied terrains, AI workloads demand hardware tailored to their unique processing needs. This specialization is precisely why tech giants are now innovating beyond general-purpose solutions.

For years, the burgeoning field of artificial intelligence has largely relied on graphics processing units (GPUs) originally designed for visual rendering. While powerful, these off-the-shelf solutions, primarily from Nvidia, weren’t always perfectly optimized for the nuanced demands of AI. This presented a clear opportunity for companies with massive AI infrastructure, like Microsoft, to forge their own path.

The Dawn of Custom AI Silicon

The landscape of artificial intelligence is experiencing an unprecedented boom, from powering sophisticated language models to generating vast quantities of synthetic data. At the heart of this revolution lies computational power, traditionally supplied by market leaders like Nvidia whose GPUs have become the de facto standard for both training and deploying AI models.

However, as AI workloads become more specific and demanding, leading tech companies are realizing the immense benefits of developing their own custom silicon.

This shift allows for hardware precisely engineered for their unique software ecosystems, enabling greater efficiency, superior performance per dollar, and enhanced control over their critical infrastructure. Microsoft’s introduction of Maia 200 marks a significant step in this strategic direction, signaling its commitment to self-sufficiency and innovation in the fiercely competitive AI hardware race.

Introducing Maia 200: An Inference Powerhouse

Maia 200 is an AI accelerator built for inference: the process of running an already trained AI model to make predictions or generate content. Think of it as the engine behind real-time AI applications, from answering your Copilot queries to generating text with OpenAI’s models.

Here’s a closer look at what makes Maia 200 special:

- Cutting-Edge Fabrication: Fabricated on TSMC’s advanced 3-nanometer process, each Maia 200 chip contains more than 140 billion transistors. This dense design packs immense computational power into a compact footprint.

- Specialized Tensor Cores: The chip incorporates native FP8/FP4 tensor cores. These are specialized processing units highly optimized for the matrix multiplication operations that are fundamental to AI computations, particularly for lower-precision calculations (8-bit and 4-bit floating point) which are increasingly common in efficient AI models.

- Redesigned Memory System: To combat data bottlenecks, Maia 200 features a sophisticated memory architecture including 216GB of HBM3e (High Bandwidth Memory) with a massive 7 TB/s bandwidth, along with 272MB of on-chip SRAM. This ensures that massive AI models are constantly fed with data at high speeds, crucial for real-time inference.

- Exceptional Performance: Maia 200 delivers over 10 petaFLOPS at 4-bit precision (FP4) and over 5 petaFLOPS at 8-bit precision (FP8), all within a 750W SoC TDP envelope. In practical terms, this means it can run today’s largest models with headroom for larger, more complex future systems.

- Efficiency Advantage: Microsoft claims Maia 200 offers 30% better performance per dollar than the latest-generation hardware in its existing fleet. At the scale of Microsoft’s AI services, that translates directly into substantial cost savings.
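
The headline figures in the list above can be turned into a few back-of-envelope numbers. The sketch below is illustrative only. It uses the quoted lower bounds (10 and 5 petaFLOPS, 750W, 216GB, 7 TB/s, 30%), while the 200-billion-parameter model and the assumption that token generation is purely limited by memory bandwidth are hypothetical and do not come from Microsoft.

```python
# Back-of-envelope arithmetic from the specs quoted in the article.
# The constants are the article's lower bounds ("over 10 petaFLOPS", etc.).
FP4_PFLOPS = 10.0            # petaFLOPS at FP4
FP8_PFLOPS = 5.0             # petaFLOPS at FP8
TDP_WATTS = 750.0            # SoC TDP envelope
HBM_GB = 216.0               # HBM3e capacity
HBM_TBPS = 7.0               # HBM3e bandwidth in TB/s
PERF_PER_DOLLAR_GAIN = 0.30  # Microsoft's claimed improvement


def tflops_per_watt(pflops, watts):
    """Peak teraFLOPS delivered per watt of TDP."""
    return pflops * 1000.0 / watts


def full_memory_sweep_ms(capacity_gb, bandwidth_tbps):
    """Milliseconds needed to read the whole HBM once at peak bandwidth."""
    return capacity_gb / (bandwidth_tbps * 1000.0) * 1000.0


def max_decode_tokens_per_s(params_billion, bytes_per_param, bandwidth_tbps):
    """Upper bound on single-stream decoding speed.

    Assumption (hypothetical): every weight is read from HBM once per
    generated token, and nothing else limits throughput.
    """
    model_gb = params_billion * bytes_per_param
    return bandwidth_tbps * 1000.0 / model_gb


def cost_reduction(perf_per_dollar_gain):
    """Fraction of cost saved for the same amount of work."""
    return 1.0 - 1.0 / (1.0 + perf_per_dollar_gain)


if __name__ == "__main__":
    print(f"FP4: {tflops_per_watt(FP4_PFLOPS, TDP_WATTS):.1f} TFLOPS/W")
    print(f"FP8: {tflops_per_watt(FP8_PFLOPS, TDP_WATTS):.1f} TFLOPS/W")
    print(f"Full HBM sweep: {full_memory_sweep_ms(HBM_GB, HBM_TBPS):.1f} ms")
    # Hypothetical 200B-parameter model stored at 4 bits (0.5 bytes) per weight.
    print(f"Decode bound: {max_decode_tokens_per_s(200, 0.5, HBM_TBPS):.0f} tokens/s")
    print(f"Cost saved for equal work: {cost_reduction(PERF_PER_DOLLAR_GAIN):.1%}")
```

One point worth noting from this arithmetic: a 30% gain in performance per dollar corresponds to roughly 23% lower cost for the same amount of work, not 30%.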

Microsoft also compares Maia 200 with other hyperscaler-developed chips. By its own figures, Maia 200 delivers three times the FP4 performance of the third-generation Amazon Trainium and higher FP8 performance than Google’s seventh-generation TPU. On that basis, Microsoft positions Maia 200 as a leader in custom AI inference silicon.
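
To make the FP4 terminology more concrete, here is a minimal sketch of what rounding values to a 4-bit floating-point grid looks like. It assumes the E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit) used for FP4 elements in the OCP Microscaling formats, plus a simple per-block scale factor. The article does not say which FP4 encoding or scaling scheme Maia 200 uses, so the format choice and the function names are assumptions made for illustration.

```python
# Magnitudes representable in E2M1 (assumed FP4 layout; see the note above).
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block_fp4(values):
    """Quantize a non-empty block of floats to the E2M1 grid.

    The block is scaled so its largest magnitude maps to 6.0 (the largest
    E2M1 value), then each element is rounded to the nearest grid point.
    Real microscaling formats restrict the shared scale to a power of two;
    a plain float scale is used here to keep the sketch short.
    """
    peak = max(abs(v) for v in values)
    scale = peak / E2M1_MAGNITUDES[-1] if peak > 0 else 1.0
    codes = []
    for v in values:
        target = abs(v) / scale
        magnitude = min(E2M1_MAGNITUDES, key=lambda m: abs(target - m))
        codes.append(magnitude if v >= 0 else -magnitude)
    return codes, scale


def dequantize_block(codes, scale):
    """Map quantized grid values back to approximate original values."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [3.0, -1.0, 0.3, 0.8, -2.2]
    codes, scale = quantize_block_fp4(weights)
    print("codes:", codes, "scale:", scale)
    print("restored:", dequantize_block(codes, scale))
```

With only eight magnitudes available, small values such as 0.3 and 0.8 come back as 0.25 and 0.75. That rounding error is the price of halving memory traffic relative to FP8, which is why low-precision inference depends on models that tolerate it.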

Driving Microsoft’s AI Ambitions

Maia 200 is not just a technological marvel; it’s a strategic asset for Microsoft’s extensive AI ecosystem. It is designed to serve a multitude of critical models and applications:

- OpenAI Integration: Maia 200 will power the latest GPT-5.2 models from OpenAI, reinforcing Microsoft’s partnership and commitment to pushing the boundaries of generative AI.

- Microsoft 365 Copilot: The chip will enhance the performance per dollar for Microsoft 365 Copilot, bringing more efficient AI assistance to millions of users globally.

- Internal AI Development: Microsoft’s Superintelligence team will leverage Maia 200 for synthetic data generation and reinforcement learning, accelerating the improvement of next-generation in-house AI models. Its unique design is particularly adept at rapidly generating and filtering high-quality, domain-specific data, thereby feeding training pipelines with fresher, more targeted signals.

- Global Deployment: Maia 200 is already operational in Microsoft’s US Central datacenter region near Des Moines, Iowa, with deployment in the US West 3 datacenter region near Phoenix, Arizona, next in line, and future global expansion planned.

Engineering for Cloud-Scale Efficiency

Beyond raw processing power, the effectiveness of an AI accelerator lies in its ability to function seamlessly within a massive cloud environment. Maia 200 addresses this with innovative system-level optimizations:

- Advanced Memory Subsystem: Recognizing that FLOPS alone aren’t enough, Maia 200 focuses on feeding data efficiently. Its memory subsystem is centered on narrow-precision datatypes, a specialized DMA (Direct Memory Access) engine, on-die SRAM, and a specialized NoC (Network-on-Chip) fabric for high-bandwidth data movement. This ensemble significantly increases token throughput, a critical metric for generative AI.

- Scalable Network Design: Maia 200 introduces a novel, two-tier scale-up network built on standard Ethernet. This architecture, combined with a custom transport layer and tightly integrated NIC (Network Interface Card), ensures high performance, strong reliability, and significant cost advantages without proprietary fabrics. Each accelerator offers 2.8 TB/s of bidirectional, dedicated scale-up bandwidth and supports predictable, high-performance collective operations across clusters of up to 6,144 accelerators.

- Reduced Total Cost of Ownership (TCO): This optimized system architecture delivers scalable performance for dense inference clusters while simultaneously reducing power usage and overall TCO across Azure’s global fleet. A unified fabric simplifies programming, improves workload flexibility, and reduces stranded capacity, maintaining consistent performance and cost efficiency at cloud scale.
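
The per-accelerator figures above can be scaled up to a full 6,144-accelerator cluster with simple arithmetic. The sketch below is a rough estimate, not a measured result. It assumes the 2.8 TB/s bidirectional figure splits evenly into 1.4 TB/s per direction, and it models only the bandwidth term of a ring-style all-reduce, ignoring latency, topology, and protocol overhead. The function names are hypothetical.

```python
# Cluster-level arithmetic from figures quoted in the article.
ACCELERATORS = 6144   # maximum scale-up cluster size
SCALE_UP_TBPS = 2.8   # bidirectional scale-up bandwidth per accelerator
HBM_GB = 216          # HBM3e capacity per accelerator
FP4_PFLOPS = 10       # lower bound on FP4 throughput per accelerator


def cluster_totals(n=ACCELERATORS):
    """Aggregate peak capacity of n accelerators (decimal units)."""
    return {
        "hbm_petabytes": n * HBM_GB / 1_000_000,
        "fp4_exaflops": n * FP4_PFLOPS / 1_000,
        "scale_up_petabytes_per_s": n * SCALE_UP_TBPS / 1_000,
    }


def allreduce_bandwidth_ms(message_gb, n=ACCELERATORS,
                           per_direction_tbps=SCALE_UP_TBPS / 2):
    """Bandwidth-only time estimate for an all-reduce, in milliseconds.

    In a ring-style all-reduce each device sends 2 * (n - 1) / n times
    the message size. The even send/receive split is an assumption.
    """
    sent_gb = 2 * (n - 1) / n * message_gb
    return sent_gb / (per_direction_tbps * 1_000) * 1_000


if __name__ == "__main__":
    for name, value in cluster_totals().items():
        print(f"{name}: {value:.2f}")
    print(f"1 GB all-reduce (bandwidth term): {allreduce_bandwidth_ms(1.0):.2f} ms")
```

Under these assumptions a full cluster holds about 1.3 PB of HBM, and the bandwidth term for a 1 GB all-reduce is roughly 1.4 ms. Real collectives will be slower once latency and congestion are included.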

A Cloud-Native Development Approach

Microsoft’s development philosophy for Maia 200 was inherently cloud-native, integrating the silicon from the ground up with the Azure ecosystem.

A sophisticated pre-silicon environment allowed for extensive modeling and optimization of computation and communication patterns, ensuring the chip, networking, and system software were co-developed as a unified whole long before physical silicon was ready.

This foresight extended to rapid datacenter integration. Complex elements like the backend network and a second-generation, closed-loop liquid cooling Heat Exchanger Unit were validated early.

Native integration with the Azure control plane provides security, telemetry, diagnostics, and management capabilities at both the chip and rack levels, maximizing reliability and uptime.

The result? AI models were running on Maia 200 silicon within days of its arrival, and the time from first silicon to datacenter deployment was cut by more than half compared to similar AI infrastructure programs.

The Future of AI is Multigenerational

The introduction of Maia 200 is not a one-off event but the beginning of a multi-generational AI accelerator program for Microsoft.

As Maia 200 rolls out across global infrastructure, future generations are already in design, promising continuous advancements in performance and efficiency for the most critical AI workloads.

To foster innovation and adoption, Microsoft is inviting developers, AI startups, and academics to explore early model and workload optimization with the new Maia 200 Software Development Kit (SDK).

The SDK includes a Triton Compiler, PyTorch support, low-level programming in NPL, and a Maia simulator with a cost calculator, enabling developers to optimize for efficiencies early in the code lifecycle.

The era of large-scale AI is truly just beginning, and the underlying infrastructure will define what’s possible. With Maia 200, Microsoft is taking a bold step to shape that future, ensuring its AI ambitions are powered by cutting-edge, custom-designed hardware.

Key Takeaways

- Microsoft’s Maia 200 is a custom AI accelerator optimized specifically for AI inference workloads, positioned to challenge Nvidia’s dominance.

- Built on TSMC’s 3nm process, it features specialized FP8/FP4 tensor cores, a redesigned memory system (216GB HBM3e), and delivers over 10 petaFLOPS (FP4) performance within 750W TDP.

- Maia 200 offers a claimed 30% better performance per dollar, powering OpenAI’s GPT-5.2, Microsoft 365 Copilot, and internal AI development for improved efficiency and cost savings.

- Its cloud-native design ensures seamless integration with Azure, featuring advanced networking (2.8 TB/s bidirectional bandwidth) and liquid cooling for scalable, efficient, and reliable cloud-scale inference.

- Maia 200 marks the beginning of a multi-generational AI accelerator program, with an SDK available for developers to optimize models for future AI advancements.
