Music has always been an integral part of human experience, offering solace, joy, and profound connection. The deep bond between music and the human brain, evident in the worldwide pull of hits like “Despacito” and “Gangnam Style,” inspired researchers in the Netherlands and India to design an experimental artificial intelligence (AI) system that can identify which song a listener is hearing solely by analyzing their brainwaves.

Unlocking Music Recognition with AI Brainwave Analysis

This pioneering study was a collaborative effort between researchers from the Human-Centered Design department at Delft University of Technology in the Netherlands and the Cognitive Science department at the Indian Institute of Technology Gandhinagar.

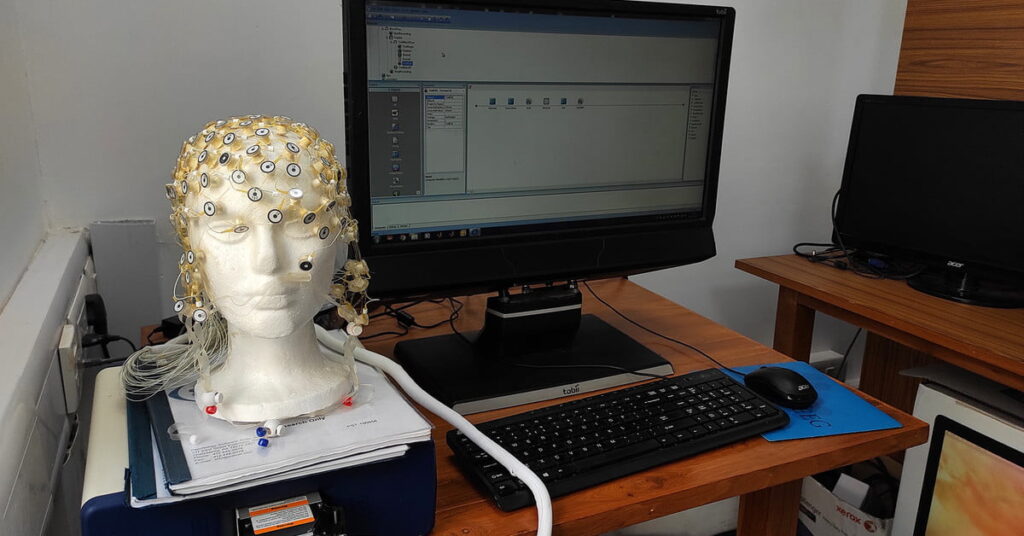

For the experiment, the researchers recruited 20 participants and asked them to listen to 12 different songs through headphones. To help them focus, participants sat in a dark room and wore blindfolds. Each participant also wore an EEG (electroencephalogram) cap that recorded their brain activity while they listened.

The songs spanned both Western and Indian genres. To identify which song a participant was hearing, the researchers used an artificial neural network to match the recorded brainwave data against known song patterns. When tested on new data it had not seen during training, the network identified the songs with 80% accuracy, using only the participants’ brainwave signals.
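The article does not describe the study’s neural network in detail, so the Python sketch that follows is only a rough illustration of the general idea: match a brainwave recording against known song patterns, then measure accuracy on held-out recordings. It deliberately swaps in a much simpler technique than a neural network, comparing an EEG segment with one template signal per song using Pearson correlation and picking the best match. The function names, templates, and synthetic signals are all hypothetical; real EEG decoding involves multiple channels, filtering, and learned features.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (std_x * std_y)


def identify_song(eeg_segment, templates):
    """Return the song whose (hypothetical) template best correlates with the segment."""
    return max(templates, key=lambda song: pearson(eeg_segment, templates[song]))


def accuracy(predictions, labels):
    """Fraction of predictions that match the true song labels."""
    return sum(p == t for p, t in zip(predictions, labels)) / len(labels)


if __name__ == "__main__":
    random.seed(0)
    # Synthetic stand-ins for per-song brainwave patterns (not real EEG data).
    templates = {
        "song_a": [math.sin(0.10 * t) for t in range(200)],
        "song_b": [math.sin(0.37 * t) for t in range(200)],
        "song_c": [math.cos(0.05 * t) for t in range(200)],
    }
    # Held-out "recordings": a randomly chosen template plus Gaussian noise.
    labels, predictions = [], []
    for _ in range(30):
        song = random.choice(list(templates))
        noisy = [v + random.gauss(0, 1.0) for v in templates[song]]
        labels.append(song)
        predictions.append(identify_song(noisy, templates))
    print(f"held-out accuracy: {accuracy(predictions, labels):.0%}")
```

Correlation is used here only because it makes the “match against known patterns” step easy to see. A trained neural network, as in the study, can instead learn which features of the signal distinguish one song from another rather than relying on a fixed template.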

“This method allowed us to build a more comprehensive and representative sample for both training and testing the AI model,” stated Krishna Miyapuram, assistant professor of cognitive science and computer science at the Indian Institute of Technology Gandhinagar. He added, “The effectiveness of our approach was strongly validated by achieving impressive classification accuracies, even when we utilized a smaller portion of the dataset for training.”

“Our findings clearly indicate that individuals experience music in highly personalized ways,” Miyapuram explained. “While it’s generally expected that brains process low-level or stimulus-level features similarly across individuals, when it comes to music, it appears that higher-level cognitive functions, particularly those related to enjoyment, are what differentiate individual experiences.”

The project’s ongoing objective is to map the relationship between specific EEG frequencies and musical frequencies. This research aims to clarify whether a stronger aesthetic connection to music is reflected in a higher degree of neural resonance. In essence, the idea is that listeners with a deeper appreciation for a piece of music show stronger brainwave correlations, which makes the song easier to identify. If that holds, it might become possible to predict how much a person enjoys a piece of music simply by analyzing their brainwaves, offering new insight into why people seek out and engage with music in the first place.


The Future Potential of Brainwave Analysis and AI

Beyond identifying songs, a short-term application of this brainwave-reading technology is personalized music recommendation based on a listener’s neural responses. In the medium term, Derek Lomas of Delft University of Technology suggests, it could evolve into tools for understanding the “depth of experience” a person has with various media. Such tools might predict how engaged someone is while watching a movie or listening to an album, and that engagement data could then be used to make experiences more immersive. Imagine timing a film’s scene changes to maximize engagement for 90% of its audience, guided by real-time brainwave feedback.

Looking further ahead, approximately two decades from now, Lomas envisions the ultimate goal: “transcribing the contents of imagination into a viewable form – essentially, converting thoughts into text.” This ambitious objective represents a significant future frontier for brain-computer interfaces.

AI’s Capability in Reading Human Emotions

Reading brainwaves is not an entirely new idea in the technology sector, and various research initiatives have explored it for diverse purposes. One related area is the development of AI-powered robots designed to interpret human emotions. A study on ‘Emotional Intelligence’ suggested that such robots could assist people with tasks by responding to the emotions they observe.

References:

- https://www.digitaltrends.com/features/ai-identifies-songs-by-reading-brain-waves/

- https://hitechglitz.com/ai-identifies-songs-you-hear-through-brain-waves/

(For more interesting stories on technology and innovation, keep reading The Inner Detail.)