For years, “AI” in smartphones meant a few clever photo filters or a voice assistant that could barely set a timer. But in 2026, the industry has undergone a fundamental shift.

We’ve moved past isolated features and into the era of OS-level intelligence, where Large Language Models (LLMs) and on-device agents are the very heartbeat of the operating system.

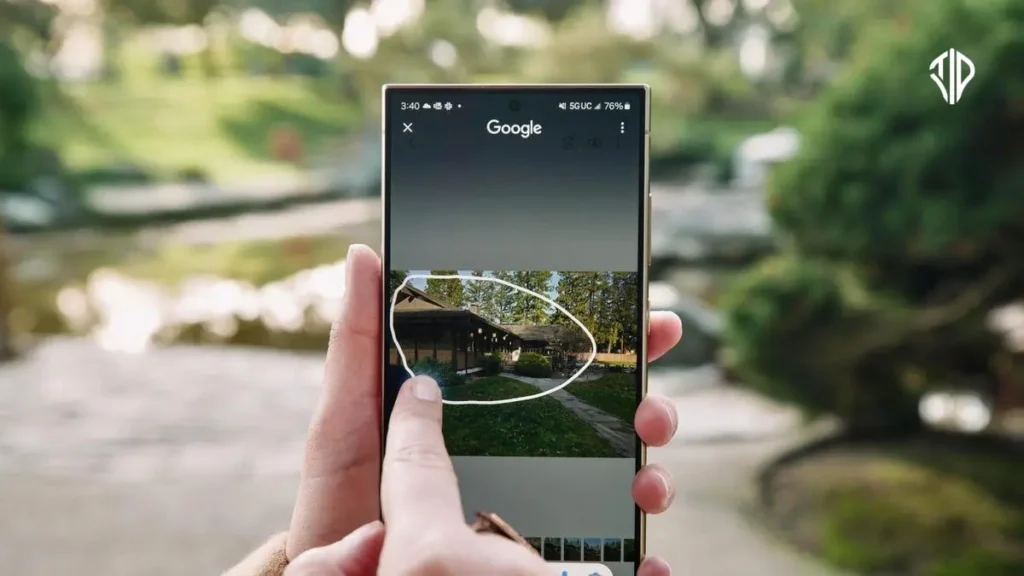

The smartphone is no longer just a portal to your apps; it is an active participant in your life, capable of seeing what you see and predicting what you need before you ask.

Smartphone Brands and Their Brains – AI Models

While the “Big Three” of Tech (Google, Apple, and Samsung) set the initial pace, the 2026 landscape is defined by specialized innovation from brands like Xiaomi, Oppo, and Realme, each bringing a unique “agentic” flavor to the table.

Google: The Home Field Advantage

Google’s Pixel lineup remains the “gold standard” for AI integration. With Gemini 2.5 Pro and Gemini Nano baked into the core, the 2026 Pixel experience is dominated by Gemini Live. It’s a native voice experience so fluid it feels like talking to a human.

Furthermore, the integration of Veo has turned the Pixel into a miniature Hollywood studio, allowing for AI-powered filmmaking that can generate cinematic b-roll or lighting adjustments on the fly.

Apple: Privacy-First Intelligence

Apple’s approach with Apple Intelligence on the iPhone 17 series relies on a sophisticated “Triple-Threat” model strategy. Apple’s own Foundation Models run on-device for privacy, while requests for high-level creative writing or complex coding are handed off to Gemini or GPT-4o.

Features like Genmoji and Image Playground have turned the iPhone into a playground for generative art, while the revamped Siri can finally perform actions across third-party apps with near-perfect accuracy.

Samsung: The Power of Choice

Samsung’s Galaxy AI is the most versatile of the three. It builds on Gemini and Samsung’s own Gauss model, and the company has introduced a “Choice of Agents” policy on top of that. For the first time, users can swap or combine Bixby with Gemini, or with Perplexity for search-focused queries.

Their new “Now Nudge” feature uses on-device patterns to proactively suggest actions—like drafting a reply to a missed call based on your calendar availability.

Xiaomi: The “Human × Car × Home” Ecosystem

Xiaomi has moved beyond the screen with its HyperAI ecosystem, powered by their self-developed MiMo foundation model. Their standout feature, “Miloco” (Xiaomi Local Copilot), turns the phone into a command center for the entire home.

It uses AI to understand real-world context, such as dimming the lights when it “sees” you’ve started a movie or triggering the robot vacuum when it detects clutter, all while processing sensitive data locally on-device. Xiaomi is also working on a new AI assistant named “MiClaw”, which can automate tasks on your smartphone. MiClaw is built on the MiMo model and supports MCP (Model Context Protocol), which lets it connect to external tools and opens up many automation possibilities.

Oppo & Vivo: Multimodal Visionaries

Oppo and Vivo have focused heavily on Omni, a full-modal AI model that supports simultaneous voice, video, and text input. This allows for true “live scene understanding,” where you can point your camera at a street in a foreign city and ask the AI for directions or a translation in real time.

Their AI Portrait Glow feature has also redefined mobile photography by using AI to reconstruct studio-grade lighting in low-light environments.

Realme: The Personalization Pro

Realme caters to a younger, creator-focused demographic with AI Edit Genie. Their standout “AI StyleMe” feature uses generative AI to suggest fashion and aesthetic personalizations for your UI and photos. Meanwhile, “AI Perfect Shot” acts as an invisible photography tutor, automatically optimizing camera settings and frames to ensure every shot looks like it was taken by a professional.

Smartphone AI Model Integration (as of 2026)

| Brand | Models Integrated | Primary AI Features |
|---|---|---|
| Google | Gemini 2.5 Pro, Nano, Veo | Deep Research, Gemini Live, AI Filmmaking |
| Apple | Apple Foundation, Gemini, GPT-4o | Writing Tools, Genmoji, Siri 2.0 |
| Samsung | Gemini, Samsung Gauss, Perplexity | Now Nudge, Real-time Translation, Multi-Agent support |
| Xiaomi | Xiaomi MiMo, Gemini, MiClaw | HyperAI: Miloco (Local Home Control), AI Expansion |
| Oppo/Vivo | Omni (Full-modal), Gemini | Omni Model: Live scene understanding, AI Portrait Glow |
| Realme | Google Gemini | AI Edit Genie: StyleMe & Perfect Shot camera tools |

Key Trends Defining the “Agentic” Era

- The Hybrid Model (On-Device + Cloud): Privacy is the new luxury. Gemini Nano and Apple’s local models handle your private data locally on the chip, while cloud-based models like GPT-4o handle heavy-duty reasoning.

- Multimodal Interaction: The newest Omni and Gemini 2.5 models let you point your camera at something and have a live voice conversation with the AI about what it sees, in real time.

- From Apps to “Actions”: On-device agents can now reach into your apps to execute tasks, like booking a flight and updating your calendar with a single command.
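
To make the hybrid idea concrete, here is a minimal Python sketch of how a phone might decide where a request runs. It is only an illustration: the `Request` fields, the `route` function, and the routing rules are assumptions made up for this example, not how Google, Apple, or any other vendor actually implements it.

```python
from dataclasses import dataclass

# Hypothetical labels for this sketch; not real product or API names.
ON_DEVICE = "on-device"
CLOUD = "cloud"


@dataclass
class Request:
    prompt: str
    contains_private_data: bool  # e.g. messages, photos, health data
    needs_heavy_reasoning: bool  # e.g. long-form coding or research


def route(request: Request) -> str:
    """Pick where a request runs under a simple privacy-first policy."""
    # In this sketch, private data never leaves the device.
    if request.contains_private_data:
        return ON_DEVICE
    # Non-private, demanding work goes to the larger cloud model.
    if request.needs_heavy_reasoning:
        return CLOUD
    # Everything else stays local to save latency and bandwidth.
    return ON_DEVICE


if __name__ == "__main__":
    print(route(Request("Summarize my unread texts", True, False)))
    print(route(Request("Write a JSON parser in Rust", False, True)))
```

The privacy check comes first on purpose: in this toy policy, a request that touches personal data stays on the chip even if a cloud model would give a better answer.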

The Future in Your Pocket

In 2026, the “smart” in smartphone has finally caught up to the name. We are no longer using tools; we are collaborating with agents. Whether you prefer the open, agent-swapping flexibility of Samsung, the seamless ecosystem of Google, or the privacy-hardened walls of Apple, one thing is clear: your next phone won’t just be a screen—it will be a second brain.
