Tech giants are heavily investing in generative AI chatbots and rapidly integrating them across various platforms. These tools, designed to summarize information and automate analysis, are becoming a primary way users interact with the internet. But as these tools grow more popular, questions about their accuracy and overall trustworthiness have become critical, especially when they handle information drawn from reliable sources.

A recent comprehensive study sheds light on which models are struggling the most and why users should maintain a healthy degree of skepticism.

45% of AI Answers Have Errors

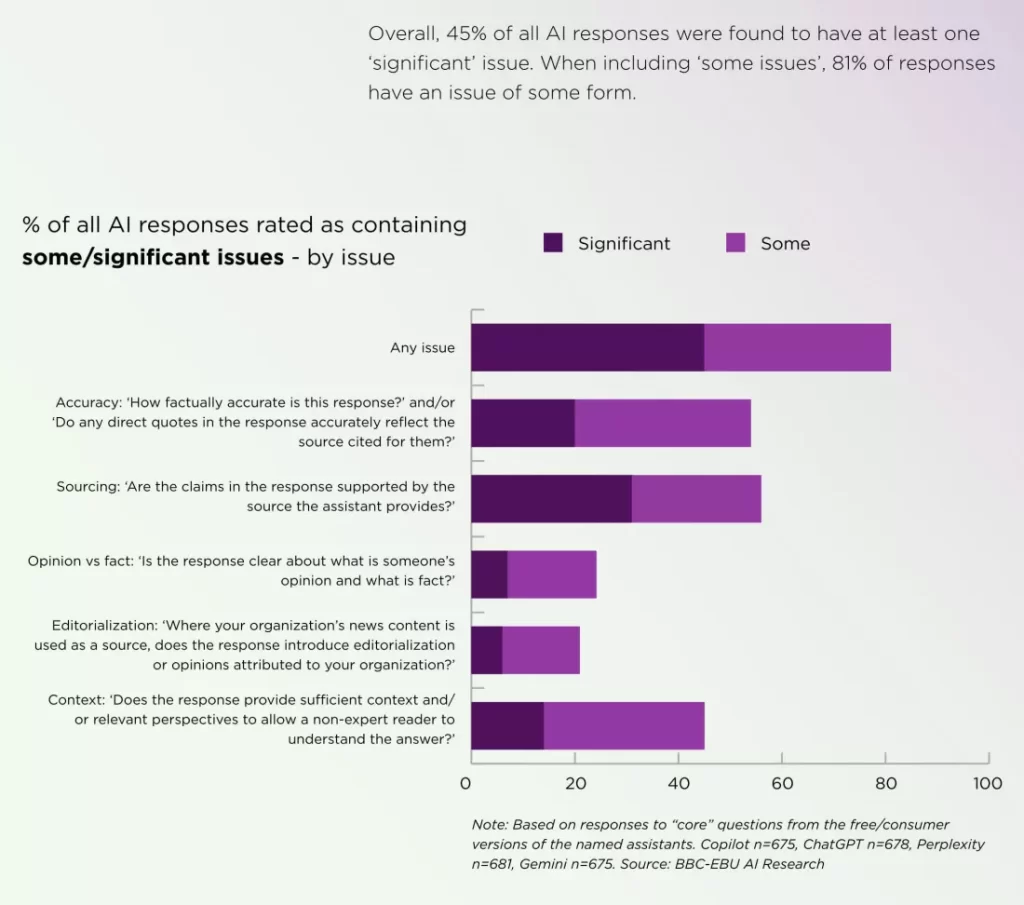

Despite years spent minimizing “hallucinations,” AI developers still have a long way to go, assuming the problem is even solvable. An analysis conducted by the BBC and 22 public media organizations across 18 countries and 14 languages revealed serious problems with AI accuracy.

When queried about specific news stories, AI chatbot responses based on news articles were found to contain errors about 45 percent of the time. These issues persisted across different regions and languages. This does show some improvement, since the portion of responses with serious errors fell from 51 percent in an earlier study to 37 percent in the more recent analysis, but the overall rate of inaccuracies remains high.
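To put the drop from 51 percent to 37 percent in perspective, here is a minimal sketch that separates the change in percentage points from the relative change. The function names are hypothetical and chosen only for illustration; the two input figures are the ones quoted above.

```python
def percentage_point_change(before: float, after: float) -> float:
    """Absolute difference between two percentages, in percentage points."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Change relative to the starting value, expressed as a percentage."""
    if before == 0:
        raise ValueError("before must be non-zero to compute a relative change")
    return (after - before) / before * 100


# Figures quoted in the article: share of responses with serious errors.
earlier_study = 51.0
recent_study = 37.0

points = percentage_point_change(earlier_study, recent_study)
relative = relative_change(earlier_study, recent_study)

print(f"Change: {points:.1f} percentage points")
print(f"Relative change: {relative:.1f}%")
```

In other words, the serious-error rate fell by 14 percentage points, which is a bit more than a quarter of the earlier figure. That is a real improvement, but it still leaves more than one in three responses with a serious error.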

Which Models Lead and Which Lag?

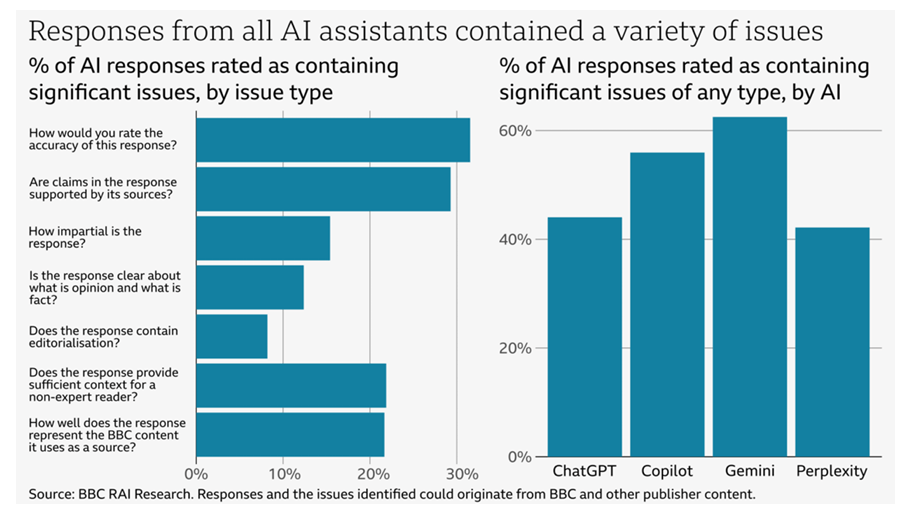

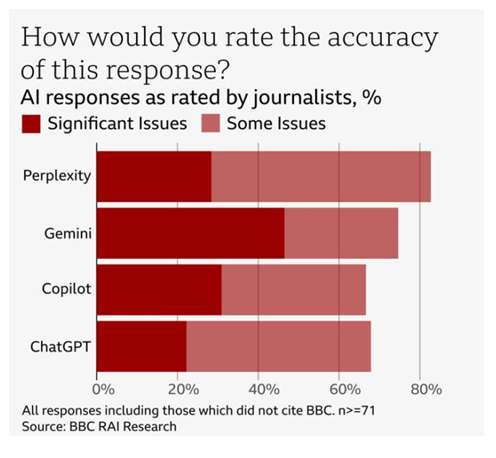

When examining specific AI models, notable differences in reliability emerged, providing crucial insight into which tools might be considered more (or less) trustworthy in their current state.

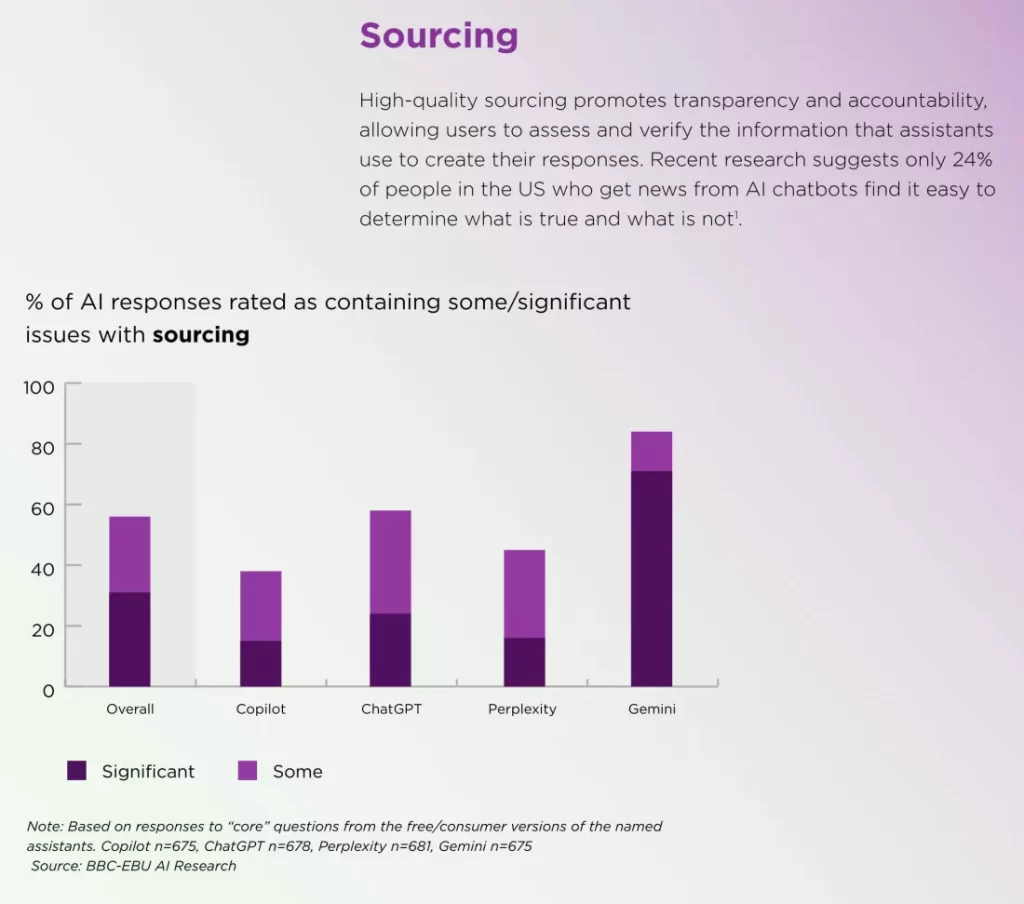

The study compared several leading models, including Google’s Gemini, OpenAI’s ChatGPT, Microsoft’s Copilot, and Perplexity. The data indicates that Gemini lagged significantly behind ChatGPT, Copilot, and Perplexity on accuracy. Specifically, Gemini showed significant sourcing errors in 72 percent of its responses.

The errors observed today should theoretically not be happening, as modern versions of models like ChatGPT are no longer limited to older training data (like information only up to September 2021) and now have access to the live internet.

The persistence of these inaccuracies suggests that the issue may be inherent in the underlying algorithms, meaning the problem may not be easily fixed.

Beyond Factual Errors: The Nuance Problem

The trustworthiness of an AI model is not only judged by simple factual mistakes but also by its ability to handle nuance, context, and accurate attribution. The analysis found various types of issues with AI-generated output:

- Sourcing Challenges: Sourcing was identified as the biggest challenge. Chatbots frequently provided links that did not correspond to the sources they cited.

- Contextual Confusion: Even when models accurately referenced material, they often failed to distinguish opinion from fact or differentiate satire from regular news.

- Outdated Information: Chatbots can be slow to update information on political figures and leaders. For example, ChatGPT, Copilot, and Gemini incorrectly named the current German chancellor and NATO’s secretary-general.

- Factual/Date Misattribution: In a striking example of complex error, ChatGPT, Copilot, and Gemini incorrectly stated that Pope Francis is the current Pope, even though he had died and been succeeded by Leo XIV. More concerning still, Copilot accurately reported Pope Francis’s date of death while simultaneously describing him as the current Pope.

The Unsettling Impact on Public Trust

Despite the evidence showing that AI chatbots struggle significantly with accuracy, a concerning portion of the public still trusts their answers. Researchers found that more than one-third of British adults trust AI to accurately summarize the news, a figure that rises to nearly half of adults under the age of 35.

This misplaced trust has serious implications for the credibility of news outlets. If an AI model misrepresents a news outlet’s content, 42% of adults would either blame both the original source and the AI or simply trust the original source less. If the known problems with accuracy and sourcing persist, the increasing popularity of generative AI tools could seriously damage the credibility of established news organizations.

Key Takeaways

- AI chatbots exhibit a significant error rate in summarizing news articles, with nearly half of responses containing inaccuracies.

- Google’s Gemini model notably lags behind competitors like ChatGPT, Copilot, and Perplexity in terms of accuracy and sourcing.

- Beyond factual errors, AI models struggle with nuance, context, and accurate attribution, leading to issues like sourcing challenges and outdated information.

- A concerning portion of the public trusts AI-generated summaries, potentially impacting the credibility of established news organizations.
